Appetizer

Ahoy fellow Cyberscouts! It's been a couple of weeks since our last encounter, when I introduced an example of an applied AIMOD2 hunt mission, using Citrix CVE-2023-3519 as the target.

What have I been doing since then, you ask? Well, I've been building Active Cyber Defence capability at a global scale, by identifying the right structures and approaches that an organization can take to develop proactive threat detection and countermeasures.

Aside from that, I've been pondering hard on the question of "why" in threat detection. Why hunt for this threat and not that threat? Why create a detection for x, y, and z, as opposed to b, c, and d?

The peril of pondering this question for too long is that you may end up in complete relativism. But if you are curious enough, have tolerance for uncertainty, and avoid collapsing the space of possibilities too soon, there are treasures to be found. It is all about becoming a navigator of these complex times.

My reflection efforts have produced a blog post series around "The Problem of Why: Threat-Informed Prioritization in Hunting and Detection Engineering". These are not published yet, but if you stay tuned, your email client will surely let you know, unless, of course, you have flagged the Tales of a CyberScout as SPAM, for which I wouldn't blame you, to be honest 😉.

Today, though, it is time for us to delve into a different topic. This epistle has been sitting in my Obsidian client for long enough and I believe it is time to finally publish it: what does a threat hunting pipeline look like?

The Intel Illusion

Most threat hunting and detection engineering models are quick to state that threat hunting should be "threat-led". What they don't state is what that is supposed to mean.

Most people assume that leveraging threat intelligence for hunting and detection engineering simply means consuming feeds full of IOCs. I am not sure what dark magic is operated to transform that into hunting hypotheses or missions, but I'm sure there are archmages that can do that out there (perhaps some extreme data analytics comes to the rescue).

Some people think that leveraging intel for hunting and detection engineering is all about understanding external threat actor behaviour. That is, the tactics, techniques, and procedures that specific threat groups like Cozy Bear (APT29) exhibit in the "wild", which are captured by the big Cyber Consultancies and TI companies out there, then disseminated amongst the commoners.

Some other people (yeah, my maths here are pretty loose: some + some = still some), the very few, realize something crucial to any serious threat hunting program: EVERYTHING IS INTEL.

Let's say you run internal penetration tests and the testers find holes in your applications, big deal uh? What do you do with that information? You probably pass it on to a bunch of happy devs (uh... yeah, because those guys are mega happy about this news surely) who will go on their merry way and fix the vulnerability. Internal Patch Tuesdays.

And what else? Is that all you do with such an important piece of information? What about:

- understanding whether there are any threat actors that have been associated with exploiting the same bugs

- running OSINT on the vulnerability type to understand correlations and connections to other bugs in a typical attack chain

- passing that info to your operational blue teams to perform retrohunts, in order to understand whether anyone else has been able to exploit the same vulnerability in the past

- collating this information into an internal vulnerability database to understand engineering patterns and how to improve them

So you hire a Red Team to come and do an assessment of your security controls end-to-end. They set up a phishing structure and phish one of your employees with weaponized docos or credential harvesting. They deploy a C2, run BloodHound, identify attack vectors, move laterally, escalate privileges, obtain domain admin, exfiltrate data and achieve objectives. They completely, utterly pwnd you for the 5th time and everybody is raising risks left, right, and center.

What do you now do with that information? Raise a risk, fix things and job done?

What about, well... pretty much everything I just said for the pentest case, and then add:

- performing attack path modelling by leveraging MITRE Attack Flow

- tracking adversarial emulation engagements as you would any other threat actor (even when they are not)

- breaking down attack chain information to understand cyber deception opportunities

And the list can go on.

The point is, if your notion of "intel" is merely reduced to external threat actors, then you are missing out on fantastic sources of intel right inside your perimeter.

Don't get me wrong, intel that pertains to external threat actors is hugely relevant to blue teams; it belongs to the domain of predictive analytics and projected attack vectors.

The awareness this brings to the business is core to any serious cyber security program. But by limiting your hunt pipeline to external sources of information, you are blinded to the potential of derived analytics from internal sources, which play an essential role in probing and responding to internal security controls and their breaches.

The Active Defence Threat Hunting Pipeline

So how do we evolve past the abovementioned shortcomings? My small proposition is that we shift our mindset 360°, not in a circle but in a spiral.

I always liked spirals more than circles. A spiral is a combination of circular motion and linear motion. The circular motion is what allows you to make the turn, while the linear motion allows you to progress along the spiral path. Spirals don't just represent cycles, they also represent quantum leaps, evolutions, dislocations.

Let's begin by assuming that EVERYTHING in our environment IS INTEL. It can be in a less refined or more refined state, more or less interconnected, more or less clustered, more or less coherent, but it can be considered a source of threat intelligence, in principle. We can have our classical philosophical debates in later epistles; I am aware that intelligence involves more than merely disconnected data points.

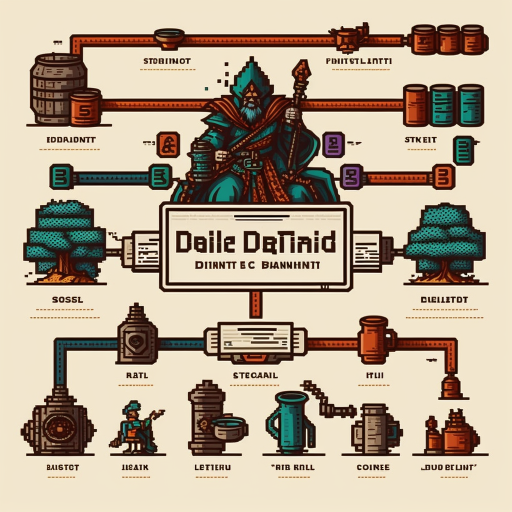

To work on a threat hunting pipeline, we simply need to draw from the AIMOD2 Framework's CAPEO model. It lays out the basic foundations not just of a hunt mission, but of an approach to the praxis of threat intelligence operationalization. In other words: the practice of deriving actionability from contextually relevant threat intel. Our initial threat hunting pipeline based on CAPEO would then look like this:

If we overlay the potential sources of intel that make up a rich pipeline we would then have to modify the funnel to this:

We can provide details on what each phase of the pipeline entails, by breaking the phases down into specific tasks:

Probably that reads too small for you 👅, so let's break down each phase into its core tasks.

Threat Hunt Pipeline High Level Process

First of all, bear in mind: each phase in the pipeline deserves its own process. We are only describing the high level aspects here. It is up to you to define the detailed process that fits your business model, industry vertical, delivery strategy, etc.

Why would I want to tell you what to do here? (I wouldn't, at least not for free 😉)

Collect

Ensure that threat information is collected into one or several platforms

Threat information will be produced by different teams, at various levels of your organization. It is nice to think all of it will be centralized in a single pane of glass, but this is usually not the case.

From the active defence threat hunting function perspective, you need to ensure that you have identified the platforms where the various functions and teams in your organization store data that has any type of threat-informed information coded within.

As long as you have access to the source data, you can then further refine it down the hunt pipeline, transforming fragmented and unrelated data into coherent clusters of actionable information, following DAIKI principles (Data, Information, Knowledge, Insight).

Ensure communication channels exist between source teams and the hunt function

Your active defence threat hunting function won't get too far if it's not continuously absorbing organizational context and synthesizing it into actionable information.

The active defence threat hunting function needs to embed itself in the various communication channels that exist between source teams producing threat-informed data.

You need to have a hunt emissary of sorts in relevant forums and make the right agreements with key stakeholders to ensure contextually relevant information flows into the hunt pipeline.

Ensure teams producing that information make it available to the hunt function

Similar to the above, ensure that threat-informed data is readily made available in some shape or form. The further along the DAIKI semantic chain, the better. That is, the more refined, structured, and relevant the information is, the easier it's going to be to digest it in the hunt pipeline and quickly operationalize it into actionable hunt missions.

Analyse

Validate and triage information

As information flows into the hunt pipeline, you need to ensure that you have a process for validating the relevancy and quality of this information.

Not all information is created equal. Information from a full-blown threat report like the CISA advisory on the exploitation of Citrix CVE-2023-3519 provides plenty of insights that are easy to digest and convert into hunt mission objectives and runbooks.

Information coming from pentest engagements where many exploitable vulnerabilities have been found in a few applications does not provide the same level of actionability. You need to perform an analysis of this type of information, identify patterns, understand the impact, and enrich this with MITRE ATT&CK TTPs and linked threat actors that leverage similar exploit vectors.

In any case, you need to triage incoming threat-informed data and prioritize what will make it further down the hunt pipeline. What deserves to be further understood and analysed, and what can we drop?

Perform use-case feasibility assessment

Once you have prioritized your information, you need to evaluate what is involved in converting the potential energy of that information into kinetic energy. At the risk of losing half my audience here because I am not using "businessy" jargon, let me rephrase the above as effectively harnessing the latent value of collected data in terms of its actionability.

Your feasibility assessment process needs to truly assess what data is material for hunt missions. This involves defining exclusion criteria and prioritization criteria based on attributes like:

- what is the freshness of the threat information

- how does it link to your Crown Jewels (because you have obviously identified them I assume? 🤭)

- what is the exploitability score of any vulnerabilities identified in the use-case, for example, by implementing EPSS (exploit prediction scoring system)

- does the threat-informed data relate to past or present incidents?

- are there prior hunt missions that could be enriched with this information, in order to run new iterations of them?

- does the potential use-case align with strategic priorities defined by your business unit or cyber operations function?

- does the information relate to external threat actors that regularly target your industry vertical?

There are many more considerations to make, but you get the point.
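To make the idea of exclusion and prioritization criteria a bit more tangible, here is a minimal sketch in Python. It is not part of AIMOD2 or any product: the `UseCase` fields, the weights, and the one-year staleness cut-off are all assumptions I made up for illustration, and you would tune every one of them to your own organization.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class UseCase:
    """A candidate use-case waiting for a feasibility assessment (hypothetical shape)."""
    name: str
    reported_on: date            # when the threat information was produced
    touches_crown_jewels: bool   # does it link to your Crown Jewels?
    epss: float                  # EPSS probability in [0, 1]; 0.0 if no CVE applies
    linked_to_incident: bool     # relates to a past or present incident
    enriches_prior_hunt: bool    # could feed a new iteration of an earlier mission
    strategic_fit: bool          # aligns with stated strategic priorities
    targets_our_vertical: bool   # tied to actors that regularly target our industry


# Assumed values, purely illustrative.
MAX_AGE_DAYS = 365
WEIGHTS = {
    "touches_crown_jewels": 3.0,
    "linked_to_incident": 2.0,
    "targets_our_vertical": 2.0,
    "strategic_fit": 1.5,
    "enriches_prior_hunt": 1.0,
}


def priority_score(uc: UseCase, today: date) -> Optional[float]:
    """Return a score (higher means more urgent), or None if the use-case is excluded."""
    age_days = max((today - uc.reported_on).days, 0)
    if age_days > MAX_AGE_DAYS:              # exclusion criterion: stale information
        return None
    freshness = 1.0 - age_days / MAX_AGE_DAYS  # 1.0 is brand new, 0.0 is at the cut-off
    score = 2.0 * freshness + 3.0 * uc.epss
    for attribute, weight in WEIGHTS.items():
        if getattr(uc, attribute):
            score += weight
    return round(score, 2)


# Illustrative backlog with made-up entries.
today = date(2023, 9, 1)
backlog = [
    UseCase("Edge appliance RCE", date(2023, 7, 20), True, 0.9, False, True, True, True),
    UseCase("Old pentest finding", date(2021, 1, 10), False, 0.1, False, False, False, False),
]
scored = [(priority_score(uc, today), uc.name) for uc in backlog]
ranked = sorted((s for s in scored if s[0] is not None), reverse=True)
```

A weighted sum is the bluntest instrument available, but it forces you to write down what you actually care about, which is half the battle.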

Identify requirements

In Cyber Threat Intelligence, you normally have to identify collection requirements at the beginning of your threat intel lifecycle. This is fine when you are the producer of information for downstream teams that consume it.

In a threat hunting pipeline, your requirement identification phase is kind of an extension of the feasibility assessment. It involves understanding what the potential use-cases are for the information that has made it thus far down the pipeline.

Requirement identification is also concerned with understanding what the rest of the pipeline looks like, whether it makes sense to add a new hunt idea to the backlog, and whether this is feasible with available resources.

This subsection is added for clarity, but it is really part of Feasibility Assessment.

Gather business context

Once you have identified a good use-case, which will be converted into a hunt mission further downstream, you have to gather further contextual awareness:

- is there documentation that relates to your potential hunt mission

- who are the key stakeholders that are linked to the threat, vulnerability, or technology stack

- is there a need to involve other teams or business functions to understand the topic better and give your hunters the best chance of success?

Business context gathering can also be collapsed into the Feasibility Assessment process.

Plan

Designate Hunt Mission Lead

Once the information has been captured, refined, and understood, it is time to designate a threat hunt mission lead, who will take over the rest of the pipeline, and ensure the use-case is converted into a hunt mission.

The hunt mission lead is accountable for the mission delivery end-to-end.

Determine Hunting Squad Composition

The hunt mission lead needs to recruit hunters from the pool, in order to prepare the hunt mission for execution.

Select Hunt Mission Type(s)

There are many hunt mission types. Threat hunters will have made their best effort to enrich and refine the information, but there is always some more work that the hunt squad will have to perform.

The mission type you choose is based on the state of the information along the DAIKI chain and what the end goal of the mission is. Do we want to simply begin to understand the data spectrum, what shape it has, the fields, and details of logs? Then perhaps an exploratory data analysis mission is the best. Do we have threat information that is very relevant to our business and technology stack, but lacks the detailed factor of threat reports? Then perhaps a hypothesis-based operation is the best mission type.
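The two examples above can be written down as a tiny decision rule. This is only a sketch: the `DataState` labels are my own shorthand for where information sits along the DAIKI chain, not official AIMOD2 terms, and the function deliberately returns `None` for cases this post does not cover.

```python
from enum import Enum
from typing import Optional


class DataState(Enum):
    """Hypothetical labels for how refined the incoming information is."""
    RAW = 1          # we barely know the shape of the data, its fields, its logs
    CONTEXTUAL = 2   # relevant to our business and stack, but short on detail
    DETAILED = 3     # full threat-report level of detail


class MissionType(Enum):
    EXPLORATORY_DATA_ANALYSIS = "exploratory data analysis"
    HYPOTHESIS_BASED = "hypothesis-based"


def suggest_mission_type(state: DataState, goal_is_understanding_data: bool) -> Optional[MissionType]:
    """Suggest a mission type from the state of the information and the mission goal."""
    if goal_is_understanding_data or state is DataState.RAW:
        return MissionType.EXPLORATORY_DATA_ANALYSIS
    if state is DataState.CONTEXTUAL:
        return MissionType.HYPOTHESIS_BASED
    # Other mission types exist; picking one is left to the hunt mission lead.
    return None
```

The suggestion is a starting point for the hunt mission lead, not a verdict.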

Lay Out Hunt Mission

The threat hunt mission lead needs to lay out the structure of the hunt mission, including timeframes, scope, and runbook.
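If you like to keep mission layouts as structured data rather than free text, something along these lines could work. It is a sketch under my own assumptions: the `HuntMission` class, its field names, and the readiness checks are hypothetical, and your own process will likely need more (or different) fields.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class HuntMission:
    """Hypothetical record of a hunt mission layout: timeframes, scope, and runbook."""
    name: str
    lead: str                 # the accountable hunt mission lead
    squad: List[str]          # hunters recruited from the pool
    mission_type: str
    start: date
    end: date
    scope: List[str]          # systems, data sources, or business units in scope
    runbook: List[str] = field(default_factory=list)  # ordered hunt steps

    def problems(self) -> List[str]:
        """Return the gaps that should be closed before the mission is executed."""
        issues = []
        if self.end < self.start:
            issues.append("end date is before start date")
        if not self.squad:
            issues.append("no hunters assigned")
        if not self.scope:
            issues.append("scope is empty")
        if not self.runbook:
            issues.append("runbook is empty")
        return issues
```

An empty list from `problems()` means the layout covers the basics, nothing more. It says nothing about whether the runbook is any good.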

Execute

Run Hunt Mission

At this point, the Hunt Pipeline Process hands over to the specific process that governs a particular mission type.

The hunt mission is run according to the stipulated process. The hunt mission process should include an outcomes phase where results are communicated to stakeholders, playbooks and reports are written, and the workload is updated.

Outcomes

Collect feedback on mission outcomes

It is important that the different stakeholders provide feedback around the relevancy, accuracy, and value-add of selected hunt missions.

The Active Defence Threat Hunting Lead needs to meet regularly with mission leads to understand enablers, blockers, gaps and issues.

Update Metrics

Whatever metrics you use to measure the flow and quality of your hunt program need to be continuously updated to ensure they reflect real stats.

Ideally, this process should be automated and DevOpsified, because why not?
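As a taste of what "automated" could mean, here is a minimal sketch that recomputes a few numbers from mission records every time it runs. The `MissionRecord` fields and the metrics themselves are invented for illustration; plug in whatever you actually measure.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class MissionRecord:
    """Hypothetical record of a mission moving through the hunt pipeline."""
    name: str
    entered_pipeline: date
    completed: Optional[date]   # None while the mission is still in flight
    detections_produced: int


def pipeline_metrics(records: List[MissionRecord]) -> Dict[str, object]:
    """Recompute pipeline metrics from scratch so they always reflect current data."""
    done = [r for r in records if r.completed is not None]
    durations = [(r.completed - r.entered_pipeline).days for r in done]
    return {
        "missions_total": len(records),
        "missions_completed": len(done),
        "mean_days_to_complete": mean(durations) if durations else None,
        "detections_produced": sum(r.detections_produced for r in done),
    }
```

Run something like this on a schedule or in a CI job against your mission tracker and nobody has to update a spreadsheet by hand.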

Identify Continuous Improvement Opportunities

Continual Service Improvement is one of the key aspects of ITIL. It is important to collect feedback from mission leads, threat hunters, and stakeholders to understand where the opportunities for improvement are.

We are not playing a finite game here but an infinite game. Continuous improvement is just part of the game.

Did you enjoy this?

And that's about it for today, folks. I have probably spoken long enough for a Sunday afternoon 😉

I have no desire to share these ideas with an audience that has no interest whatsoever in what we are building here.

And what we are building, my friends, is a pathway to active cyber defence and beyond, into adaptive cyber defence. That's why I share this with my subscribers!

I want us all to be part of a higher collective intelligence and collaborate to develop creative paths and a healthy connective tissue of like-minded humans.

Hopefully, we can help businesses protect themselves and improve the quality of life of people around the world, whilst hoping to discover and practice better ways to take care of our planet.

Enjoy your day and I hope you all have a great week ahead!

Cheers,

Diego The Cyberscout